Business logic vulnerabilities are flaws in how an application enforces its rules and workflows. They cannot be detected by automated scanners because the exploits use syntactically valid requests with no malicious payload. Only a human tester who understands what the application is designed to do can identify when that intended behaviour is being abused.

What are business logic vulnerabilities?

A business logic vulnerability is not a flaw in the syntax of code. The application does exactly what the code instructs it to do. The problem is that the code fails to enforce the correct rules: it processes requests in the wrong order, trusts values it should verify, or allows one user to access resources belonging to another.

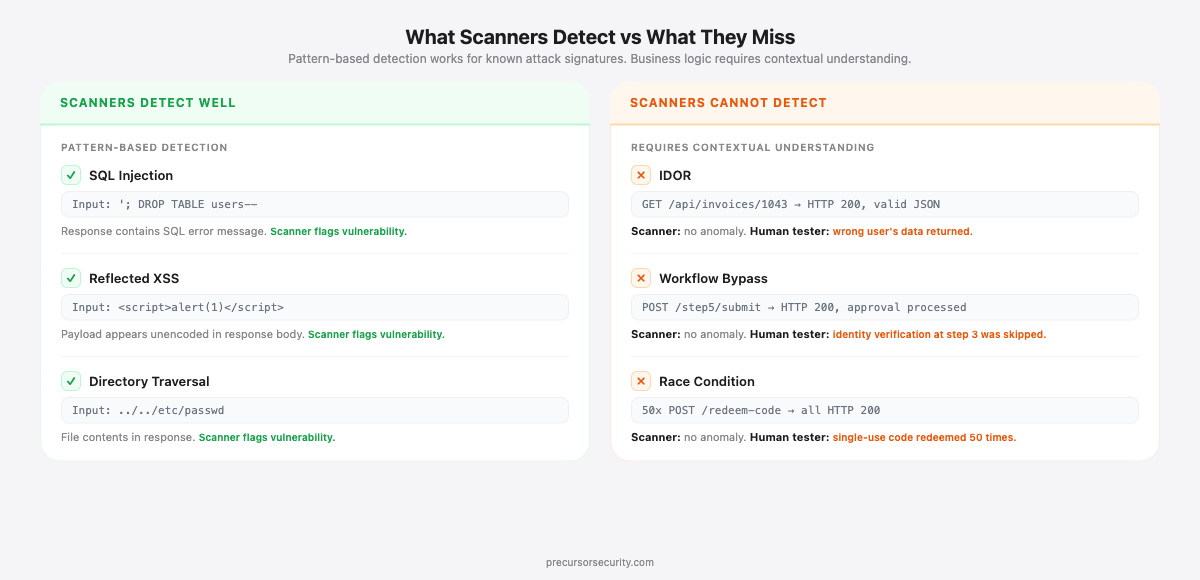

Compare this to a SQL injection attack. The attacker inserts a payload into a field that reaches a database query unsanitised. The payload is structurally anomalous. A scanner can detect it because the attack has a recognisable signature: input that looks like SQL where SQL should not appear.

Business logic flaws use entirely legitimate requests. The HTTP method is correct. The parameters are valid. The authentication token is present. The endpoint exists. The only problem is context: this user should not be performing this action, or this step should not be reachable without completing the one before it. That kind of rule exists in the business domain. It is not encoded in the HTTP specification.

OWASP formalised this category as A04:2021 Insecure Design, which entered the OWASP Top 10 for the first time in 2021. Its inclusion was the industry's acknowledgement that logic-level flaws require design-time threat modelling rather than code-level patching. The MITRE CWE catalogue groups them under the category CWE-840 Business Logic Errors, which includes CWE-841 (Improper Enforcement of Behavioural Workflow) for step-skipping attacks. Access control failures map to related weaknesses such as CWE-285 (Improper Authorisation).

What types of business logic vulnerability do penetration testers find most often?

The BreachLock 2024 Penetration Testing Intelligence Report, which analysed over 4,000 engagements, found broken access control in 32% of high-severity findings. IDOR ranked third among the most frequently identified vulnerability types in web application testing. These are not exotic edge cases. They show up in production applications across every sector, including applications that have already been through automated scanning.

What is an Insecure Direct Object Reference (IDOR)?

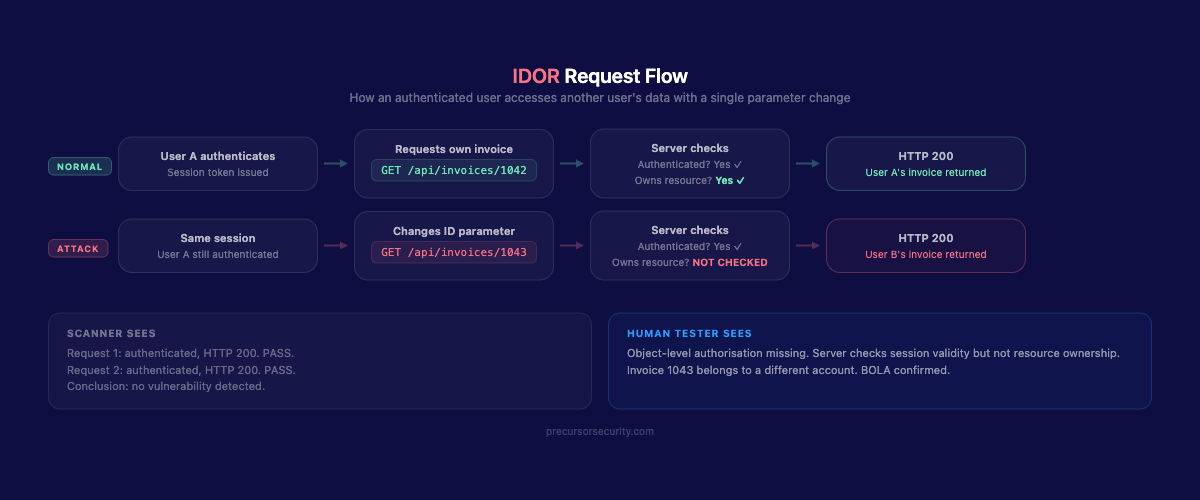

An Insecure Direct Object Reference occurs when an application exposes a reference to an internal resource, typically a numeric ID or UUID, and fails to verify that the requesting user is authorised to access it.

A user sees their invoice at GET /api/invoices/1042. They change the ID to GET /api/invoices/1043. If the application checks that the user is authenticated but not that they own invoice 1043, it returns another customer's data. Technically, the request is entirely valid. The scanner sees two authenticated requests to a known endpoint, both returning HTTP 200. There is nothing for it to flag.
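The missing check in the invoice scenario can be sketched in a few lines of Python. This is a minimal illustration, not a real framework integration: the `Invoice` class, the `INVOICES` store, the `NotFound` exception, and the `get_invoice` function are all hypothetical names, and the in-memory dictionary stands in for a database table.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Invoice:
    invoice_id: int
    owner_id: int
    total: str


# Hypothetical in-memory store standing in for the invoices table.
INVOICES = {
    1042: Invoice(1042, owner_id=7, total="120.00"),
    1043: Invoice(1043, owner_id=8, total="980.50"),
}


class NotFound(Exception):
    """Raised for missing invoices and for invoices the caller does not own."""


def get_invoice(current_user_id: int, invoice_id: int) -> Invoice:
    invoice = INVOICES.get(invoice_id)
    # Authentication established who is asking. This check decides whether
    # they may see this particular object.
    if invoice is None or invoice.owner_id != current_user_id:
        # Same error in both cases, so valid IDs cannot be enumerated.
        raise NotFound(invoice_id)
    return invoice
```

Here user 7 can read invoice 1042, while a request from the same user for invoice 1043 raises `NotFound`. Returning the same error for "does not exist" and "not yours" is a design choice made for this sketch; some teams prefer an explicit 403.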

IDOR vulnerabilities affect any resource the application exposes through a predictable or enumerable identifier: orders, accounts, files, messages, support tickets, payment methods, export functions. HackerOne receives over 200 valid IDOR reports per month across its customer programmes. Rewards for IDOR findings increased 23% year-on-year as of 2025, with valid reports up 29%. It is the access control flaw most consistently found in structured penetration testing engagements.

What is privilege escalation?

Privilege escalation splits into two types. Horizontal escalation is accessing another user's data at the same privilege level (effectively the IDOR scenario above). Vertical escalation is performing actions reserved for a higher-privilege role.

A common vertical escalation scenario: a standard user sends POST /api/users/update with role=admin in the request body. If the API trusts client-supplied role values rather than deriving the role from the authenticated session server-side, the user has just promoted their own account. Authenticated request, legitimate endpoint, no injection in the body. The flaw is that the server accepted a parameter it should have ignored entirely.
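One common way to make the server ignore that parameter is an allowlist of fields a user may edit on their own record. The sketch below assumes a hypothetical `apply_profile_update` helper and an assumed set of editable fields; real applications would usually express this through their framework's serialiser or schema layer.

```python
# Fields a user may change on their own profile (an assumed allowlist).
SELF_EDITABLE_FIELDS = {"display_name", "email"}


def apply_profile_update(user: dict, body: dict) -> dict:
    """Return an updated copy of user, dropping any field outside the allowlist."""
    allowed = {key: value for key, value in body.items() if key in SELF_EDITABLE_FIELDS}
    # "role" is never copied from the request body, so it keeps its stored value.
    return {**user, **allowed}


stored_user = {"id": 7, "display_name": "Sam", "email": "sam@example.com", "role": "user"}
request_body = {"display_name": "Sam T", "role": "admin"}
updated_user = apply_profile_update(stored_user, request_body)
```

After this call, `updated_user["display_name"]` is `"Sam T"` and `updated_user["role"]` is still `"user"`. An allowlist fails safe when new sensitive fields are added later, which a blocklist of "dangerous" fields does not.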

This category also covers a gap that appears frequently in API-first architectures: authorisation enforced at the UI layer but absent at the API layer. A user who cannot see the admin panel in their browser may be able to call admin endpoints directly, simply by constructing the requests manually.
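Closing that gap means the check has to live on the endpoint itself. As a rough sketch, assuming a hypothetical `require_role` decorator and a session dictionary populated server-side after login, an admin handler might be guarded like this:

```python
from functools import wraps


class Forbidden(Exception):
    """Raised when the session's role does not permit the call."""


def require_role(role: str):
    """Guard a handler so it only runs for sessions holding the given role."""
    def decorator(handler):
        @wraps(handler)
        def wrapper(session: dict, *args, **kwargs):
            # The role is read from the server-side session, never from the request.
            if session.get("role") != role:
                raise Forbidden(handler.__name__)
            return handler(session, *args, **kwargs)
        return wrapper
    return decorator


@require_role("admin")
def export_all_users(session: dict) -> str:
    # Hypothetical admin-only operation.
    return "export started"
```

Calling `export_all_users({"user_id": 7, "role": "user"})` raises `Forbidden` whether or not the admin panel is visible in the browser, because the decision no longer depends on the UI.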

What is payment and pricing manipulation?

E-commerce and subscription applications frequently pass pricing data through the client in checkout flows. If the server does not recalculate prices from its own product catalogue before charging the customer, the attacker simply modifies the value in transit.

A standard scenario: a checkout form submits the total as a request parameter. A tester intercepts the request and changes 499.99 to 0.01. The order processes. The server accepted the client-supplied price rather than looking up the authoritative figure from its own database.

Variations include quantity manipulation (a negative quantity triggers a refund credit), discount code chaining (applying multiple single-use codes to the same order), and currency parameter tampering (switching between currencies where exchange rates are applied client-side). A 2023 survey found 90% of online retailers report losing money to discount and loyalty point misuse. These exploits are often straightforward to execute. In every case, the scanner sees a valid POST to a checkout endpoint with structurally valid parameters.
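The server-side recalculation described above can be sketched as follows. The `CATALOGUE` dictionary, the SKU names, and the `order_total` function are assumptions made for illustration; a real system would read prices from its product database.

```python
from decimal import Decimal

# Hypothetical authoritative price list held on the server.
CATALOGUE = {"SKU-1": Decimal("499.99"), "SKU-2": Decimal("20.00")}


def order_total(items: list) -> Decimal:
    """Compute the charge from server-side prices, ignoring client-supplied amounts."""
    total = Decimal("0")
    for item in items:
        sku, quantity = item["sku"], item["quantity"]
        if sku not in CATALOGUE:
            raise ValueError(f"unknown SKU: {sku}")
        # Reject zero, negative, and non-integer quantities outright.
        if isinstance(quantity, bool) or not isinstance(quantity, int) or quantity < 1:
            raise ValueError(f"invalid quantity: {quantity!r}")
        # Any "price" or "total" key sent by the client is never read.
        total += CATALOGUE[sku] * quantity
    return total
```

A tampered item such as `{"sku": "SKU-1", "quantity": 1, "price": "0.01"}` is still charged at the catalogue price, and a quantity of `-1` raises `ValueError` instead of producing a credit. Discount and currency handling would need the same treatment and are left out of this sketch.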

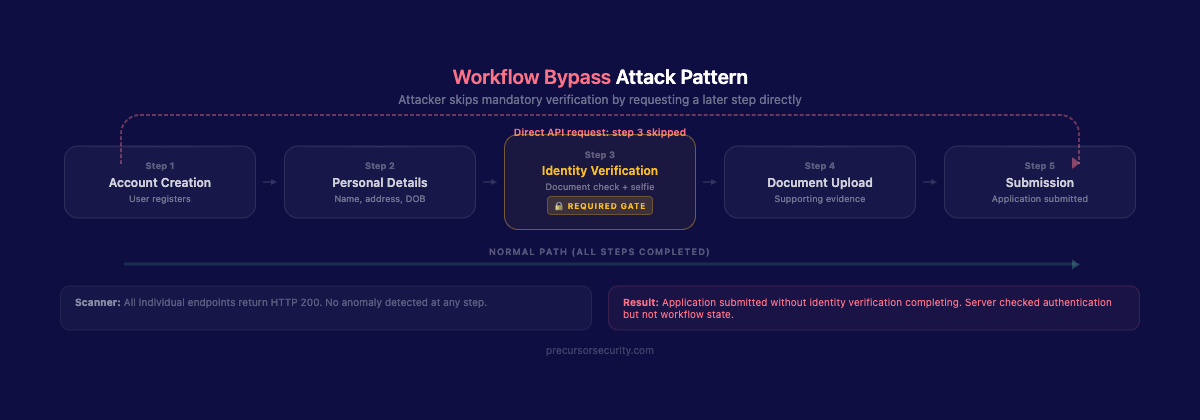

What is workflow bypass?

Multi-step processes (registration flows, loan applications, identity verification journeys, onboarding sequences) often enforce their step ordering only through the UI. The underlying API endpoints accept requests regardless of whether prior steps were completed.

A loan application requires identity verification at step 3. The approval request sits at step 5. A tester navigates directly to the step-5 endpoint and submits the final request. The server checks that the user is authenticated but not that steps 1 through 4 were completed. The loan application is processed without identity verification having taken place.

Workflow bypass vulnerabilities are common in any system where a mandatory gate (verification, payment, approval) sits in the middle of a sequence rather than being enforced as a hard prerequisite at every downstream endpoint independently.
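Enforcing the gate as a hard prerequisite might look like the sketch below. The step names, the `StepNotAllowed` exception, and the `submit_for_approval` function are hypothetical; the important assumption is that `completed_steps` is loaded from a server-side record of the applicant's progress, not from anything the client sends.

```python
# Hypothetical names for the four steps that precede the approval request.
REQUIRED_BEFORE_APPROVAL = ("details", "documents", "identity_verified", "review")


class StepNotAllowed(Exception):
    """Raised when a step is requested before its prerequisites are complete."""


def submit_for_approval(completed_steps: set) -> str:
    # Evaluated on every call to the endpoint, regardless of how the
    # user arrived there.
    missing = [step for step in REQUIRED_BEFORE_APPROVAL if step not in completed_steps]
    if missing:
        raise StepNotAllowed("missing steps: " + ", ".join(missing))
    return "submitted"
```

A direct call with `{"details", "documents"}` raises `StepNotAllowed`, naming `identity_verified` and `review` as missing. The same check belongs on every endpoint downstream of the gate, not only the final one.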

What are race conditions?

A race condition exploits a timing window in which concurrent requests are processed before shared state updates. The application's check-then-act logic is sound when requests arrive sequentially. Under concurrent load, it breaks.

A promotional code is configured for single use. A tester sends 50 simultaneous redemption requests. For each request, the application asks: "has this code been used?" The answer is no for all 50, because none of the requests has yet updated the redemption flag. All 50 discounts are applied before any request marks the code as redeemed.

The same class of vulnerability applies to loyalty point systems, referral bonuses, withdrawal limits, and any operation that reads a value, acts on it, then writes back an updated state, without a transactional lock preventing concurrent execution.
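One way to close the window is to fold the check and the write into a single conditional statement, so the database decides which request wins. The sketch below uses Python's built-in `sqlite3` module with a hypothetical `promo_codes` table and `redeem` function. It runs sequentially and does not reproduce the race itself; it only illustrates the pattern.

```python
import sqlite3


def redeem(conn: sqlite3.Connection, code: str) -> bool:
    """Redeem a single-use code. Returns True only for the request that wins."""
    with conn:  # commits on success, rolls back if an exception is raised
        cursor = conn.execute(
            "UPDATE promo_codes SET redeemed = 1 WHERE code = ? AND redeemed = 0",
            (code,),
        )
    # rowcount is 1 only if this statement flipped the flag from 0 to 1.
    return cursor.rowcount == 1


# Hypothetical schema and seed data for the example.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE promo_codes (code TEXT PRIMARY KEY, redeemed INTEGER NOT NULL DEFAULT 0)"
)
conn.execute("INSERT INTO promo_codes (code) VALUES ('WELCOME10')")
conn.commit()

results = [redeem(conn, "WELCOME10") for _ in range(50)]
```

Of the 50 attempts, exactly one returns `True`. There is no separate "has this code been used?" read for another request to slip past, because the condition is evaluated inside the same statement that performs the update.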

What is BOLA (Broken Object Level Authorisation)?

Broken Object Level Authorisation is the API-specific formulation of IDOR. It tops the OWASP API Security Top 10 in both the 2019 and 2023 editions, where OWASP describes it as "the most commonly found and impactful API vulnerability." In API contexts, the attack surface expands because APIs expose object references directly in URLs, request bodies, and GraphQL queries. API endpoints are also typically consumed programmatically, bypassing any UI-layer controls that might otherwise obscure object references.

Why can automated scanners not detect business logic flaws?

Automated scanners send payloads with known malicious characteristics and analyse responses for recognisable error patterns: SQL error messages, reflected script content, stack traces, anomalous status codes. The logic is: send a known attack, look for a known indicator of compromise.

Business logic vulnerabilities produce none of those indicators. GET /api/invoices/1043 returns HTTP 200 with valid JSON. That is precisely what the scanner expects an invoice endpoint to return. There is nothing in the response to flag.

Three structural constraints explain why this is not a problem that can be solved by making scanners smarter:

No business domain model. The scanner does not know which user owns which resource, which steps are prerequisites for which actions, or which values the server should be calculating rather than accepting from the client. These rules are in the business domain. They are not in the HTTP specification.

Sequential architecture. Race conditions require precisely timed concurrent requests. Most scanner architectures are sequential and are not designed to detect state corruption from concurrent execution. The vulnerability window is measured in milliseconds and requires deliberate tooling to reproduce.

No state machine. Identifying a workflow bypass requires a model of the intended step sequence. To flag a step-5 submission as anomalous, the tool must know that steps 1 through 4 are prerequisites. That model does not emerge from endpoint crawling. It has to be built from application knowledge.

Astra's 2025 penetration testing trends report found nearly 2,000% more vulnerabilities discovered through manual testing compared to automated tools, specifically in APIs, cloud configurations, and chained exploits. Those are precisely the areas where business logic testing concentrates.

The table below summarises the detection gap:

| Vulnerability type | Automated scanner | Manual tester | Business impact |
|---|---|---|---|
| IDOR | Not detected | Systematic enumeration and authorisation checks | Critical |
| Vertical privilege escalation | Not detected | Role parameter testing and API-level checks | Critical |
| Payment manipulation | Rarely detected | Request interception and value substitution | High |
| Workflow bypass | Not detected | Direct endpoint access, step-skipping | High |
| Race condition | Rarely detected | Concurrent request tooling, timing analysis | High |
| BOLA (API) | Not detected | API-level object reference testing | Critical |

How are business logic attacks used in the real world?

Business logic attacks are not theoretical. Imperva's State of API Security in 2024 found that 27% of API attacks in 2023 were business logic attacks, up from 17% the year before. That is a rise of 10 percentage points, or roughly 59% in relative terms, in a single year. The trend reflects both the shift to API-driven architectures and growing attacker awareness that logic flaws are considerably harder to patch at scale than technical vulnerabilities.

USPS API breach, 2018. The United States Postal Service exposed data on approximately 60 million users through an API that failed to enforce object-level authorisation. Any authenticated user could query other users' profile data, including email addresses, home addresses, and phone numbers, by modifying user ID parameters in API requests. Every request returned HTTP 200 with valid JSON. The vulnerability produced no detectable indicators for automated scanning and remained unfixed for over a year after a security researcher first reported it, until the issue was publicly disclosed.

Pre-launch e-commerce IDOR: anonymised case. During a web application penetration test before a retail platform went live, a tester found a critical IDOR in the order management API. The /api/orders/{orderId} endpoint validated authentication but not ownership. Enumerating sequential order IDs returned full order details for every user in the system: name, delivery address, card last four digits, order contents. The application had passed automated DAST scanning before the engagement. The flaw had existed across the entire order history since the beta period, meaning hundreds of test orders containing real customer data were exposed. The fix required server-side object-level authorisation at every order endpoint before launch. Without the manual engagement, the application would have gone live with a GDPR-reportable exposure embedded in its core checkout workflow.

Will penetration testers be replaced by AI?

This question comes up frequently, particularly as AI-assisted DAST tools improve and vendors market "autonomous security testing." For business logic testing specifically, the answer is no.

AI and automation are improving at pattern recognition. Modern tools identify known vulnerability signatures faster and more consistently than manual review. For vulnerabilities with recognisable patterns, they genuinely help: repetitive checks at scale, large HTTP traffic volumes processed quickly, time saved on tasks that do not require judgement.

Business logic testing is a different problem. AI-assisted DAST tools can learn from observed traffic and flag requests that deviate from that baseline. That is not the same as understanding authorisation rules. A tool trained on application traffic learns what requests the application receives. It cannot distinguish between a legitimate support admin viewing another user's record and an attacker doing the same thing. Context, role, and intent are not present in an HTTP request.

What testers bring is contextual reasoning. They read the application as a user first, understand the intended workflows, then probe what happens when those workflows are circumvented. They chain findings: an IDOR that exposes a user ID combined with a privilege escalation that accepts client-supplied roles combined with a workflow bypass that skips verification. Three low-to-medium severity findings chain into a single critical. A scanner testing endpoints in isolation will not assemble that chain.

AI will make testers more productive at the work that has always been automatable. For a web application penetration test that covers business logic, it will not replace the human tester doing the work.

How do CREST-accredited testers approach business logic testing?

Manual web application penetration testing against business logic is structured around the application's workflows, not just its endpoint inventory.

The first stage is mapping. Testers work through the application as a legitimate user, documenting every workflow, every state transition, and every point at which the application makes a trust decision: accepting a value from the client, granting access to a resource, advancing a user to the next step in a sequence.

The second stage is trust boundary analysis. For each decision point, the tester asks what happens when the boundary is violated. What if the user supplies a different ID? What if they skip a step? What if they submit a role parameter? What if they send this request from two sessions simultaneously?

The third stage is systematic testing. Each trust boundary is tested in isolation and in combination. Horizontal access at every resource endpoint. Vertical access by manipulating role and permission parameters. Workflow sequences tested out of order and with steps omitted. State-changing operations tested under concurrent load.
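The horizontal access part of that stage can be partly scripted once the tester knows who owns what. The sketch below is an illustration under stated assumptions: `find_cross_user_access` and `vulnerable_fetch` are hypothetical names, the `owners` mapping comes from the tester's own mapping work, and `fetch` stands in for whatever sends the authenticated request and returns its status code.

```python
from typing import Callable


def find_cross_user_access(owners: dict, fetch: Callable[[str, int], int]) -> list:
    """Return (user, resource_id) pairs where a non-owner received HTTP 200."""
    findings = []
    users = set(owners.values())
    for resource_id, owner in owners.items():
        for user in users:
            # Only cross-user requests are interesting here.
            if user != owner and fetch(user, resource_id) == 200:
                findings.append((user, resource_id))
    return sorted(findings)


def vulnerable_fetch(user: str, resource_id: int) -> int:
    # Stand-in for an endpoint that checks authentication but not ownership.
    return 200


findings = find_cross_user_access({1: "alice", 2: "bob"}, vulnerable_fetch)
```

Against the stand-in endpoint, `findings` contains `("alice", 2)` and `("bob", 1)`. The script only works because the ownership map was supplied by a person; deciding whether a cross-user 200 is a flaw or a legitimate support role still requires judgement.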

This takes time. It requires reading the API documentation, understanding the data model, and forming an accurate picture of what the application is designed to prevent. It is also the highest-value portion of any web application engagement, precisely because automated tooling cannot replicate it.

The contrast with SAST, DAST, and automated scanning is not one of speed or thoroughness. It is structural. Automated tools test for what they know to look for. Manual testing asks what the application should prevent, which is a fundamentally different question.

How does business logic testing differ from what automated tools and WAFs provide?

Automated scanning and web application firewalls address a different threat model. A WAF inspects request payloads for malicious signatures: injection strings, known exploit patterns, anomalous encoding. It blocks attacks that look like attacks.

Business logic vulnerabilities produce requests that look entirely normal. A WAF sees an authenticated POST to a checkout endpoint with structurally valid parameters. There is nothing to block. As our guide to WAF limitations and bypass techniques covers in detail, perimeter controls cannot compensate for authorisation gaps inside the application.

The same limitation applies across the categories in the OWASP Top 10. OWASP A01:2021 Broken Access Control rose from fifth place in the 2017 edition to first place in 2021, and it encompasses IDOR and BOLA, the flaws automated tooling consistently fails to surface. The fix is server-side authorisation logic, verified manually at every endpoint.

Frequently asked questions

What is the most common business logic vulnerability?

Insecure Direct Object References (IDOR) are consistently the most frequently identified business logic flaw in web application penetration testing. They appear in virtually every application that exposes resources through enumerable identifiers and does not enforce object-level authorisation at every endpoint. The underlying cause is almost always the same: authorisation logic is implemented at the route or controller level rather than enforced per-object in every data query. The OWASP API Security Top 10 lists the API-equivalent (BOLA) as the number one API vulnerability.

Can a bug bounty programme find business logic flaws?

Yes, but coverage is inconsistent. Bug bounty programmes attract researchers focused on high-reward targets: the highest-severity vulnerabilities in the highest-traffic workflows. An IDOR in a core billing API is likely to be found quickly. An IDOR in a lower-traffic admin export function, or a race condition in a promotional code endpoint, may go unreported for months.

A structured penetration test covers all application workflows systematically, regardless of expected reward. It will find the edge-case logic flaw in the password reset flow and the workflow bypass in the document approval sequence that a bounty researcher deprioritised. For organisations that need to demonstrate comprehensive coverage to auditors, a structured engagement is more appropriate than relying on a programme with variable depth.

How do you prevent business logic vulnerabilities?

Several practices significantly reduce exposure. Enforce all business rules server-side: never rely on the client to supply or validate values such as prices, roles, workflow state, or discount eligibility. Implement object-level authorisation checks at every endpoint that accesses a resource, not just at the authentication boundary. Use server-side sessions to track workflow progress through multi-step processes, and validate at each step that all prior steps were completed in the correct sequence. For state-changing operations that must not execute concurrently, use database transactions with appropriate isolation levels or distributed locks. Review API endpoints independently of the UI flows that call them: assume every endpoint is directly accessible to an attacker who will send any parameter combination they choose. Threat model during design, not after deployment.