The OWASP Top 10 is a ranked list of the ten most critical web application security risk categories, published by the Open Worldwide Application Security Project. The 2025 edition covers Broken Access Control, Security Misconfiguration, Software Supply Chain Failures, Cryptographic Failures, Injection, Insecure Design, Authentication Failures, Data Integrity Failures, Logging and Alerting Failures, and Mishandling of Exceptional Conditions. Each category contains multiple distinct attack techniques.

What is the OWASP Top 10 and where does it come from?

OWASP (Open Worldwide Application Security Project) is a non-profit foundation that produces free security guidance used by developers, security teams, and penetration testers worldwide. The OWASP Top 10 is its most widely cited document: a data-driven ranking of the ten most critical web application security risk categories.

The 2025 edition is the latest release, succeeding the 2021 version. Each category is scored on a combination of factors: how often the vulnerability class appears in tested applications, how exploitable it is, how well automated tools detect it, and what the technical impact looks like when it is exploited.

The important word is "categories." The OWASP Top 10 is not a list of 10 bugs. Each entry is a risk class that contains dozens of distinct attack techniques. A01 Broken Access Control alone encompasses insecure direct object reference, privilege escalation, JWT manipulation, forced browsing, and CORS misconfiguration. Understanding what testers actually do within each category is what separates a genuine assessment from an automated scan that produces a PDF.

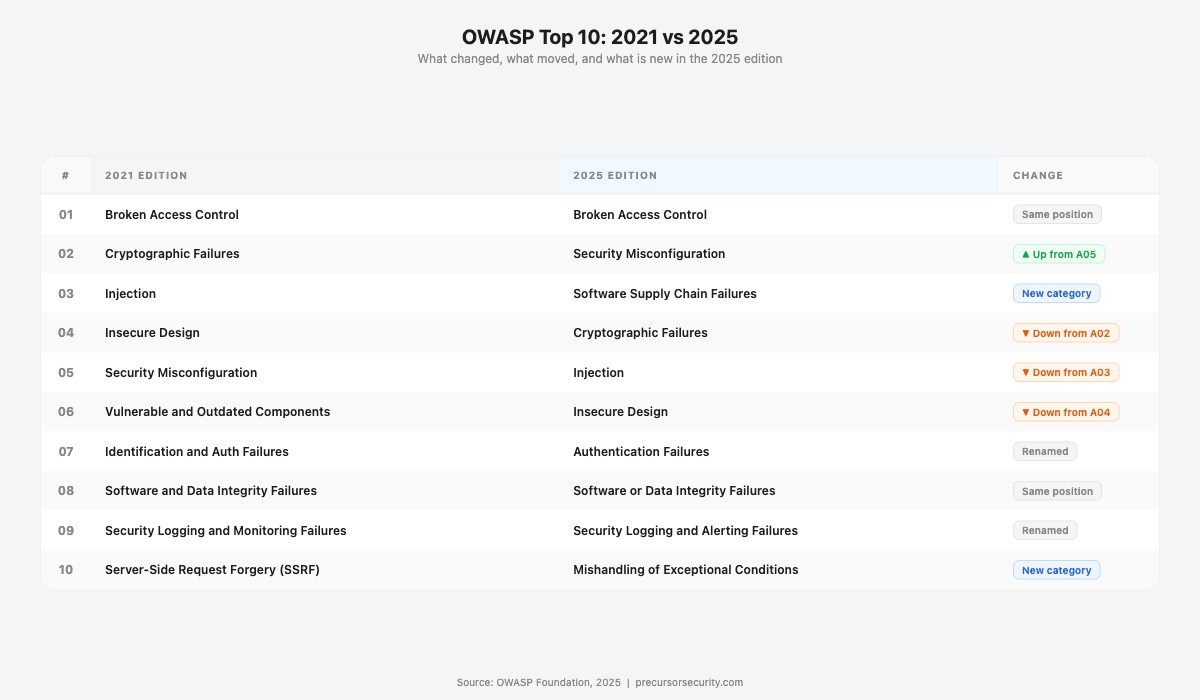

The 2025 revision introduced two new categories: Software Supply Chain Failures (A03:2025, replacing Vulnerable and Outdated Components) and Mishandling of Exceptional Conditions (A10:2025, replacing SSRF). Security Misconfiguration moved up to second place from fifth. Injection dropped from third to fifth. Broken Access Control has remained at the top since 2021, reflecting how frequently access control failures appear in real breach data.

How do penetration testers use the OWASP Top 10?

The OWASP Top 10 functions as a baseline, not a methodology. For the detailed test procedures, testers use the OWASP Web Security Testing Guide (WSTG) v4.2, which contains 91 individual test cases mapped across 11 categories. Each test case has specific pass/fail criteria and a defined procedure.

CREST (Council of Registered Ethical Security Testers) certification references OWASP WSTG procedures directly. The CREST Certified Registered Tester (CRT) examination covers input validation testing, authorisation testing, session management, cryptographic assessment, and configuration review: all OWASP-mapped domains. Organisations holding CREST accreditation provide assurance that their testers have been assessed against these standards.

In practice, a tester working through a web application penetration test uses the OWASP Top 10 to structure coverage, WSTG v4.2 for specific test procedures, and their own judgment to extend beyond both when the application's architecture warrants it.

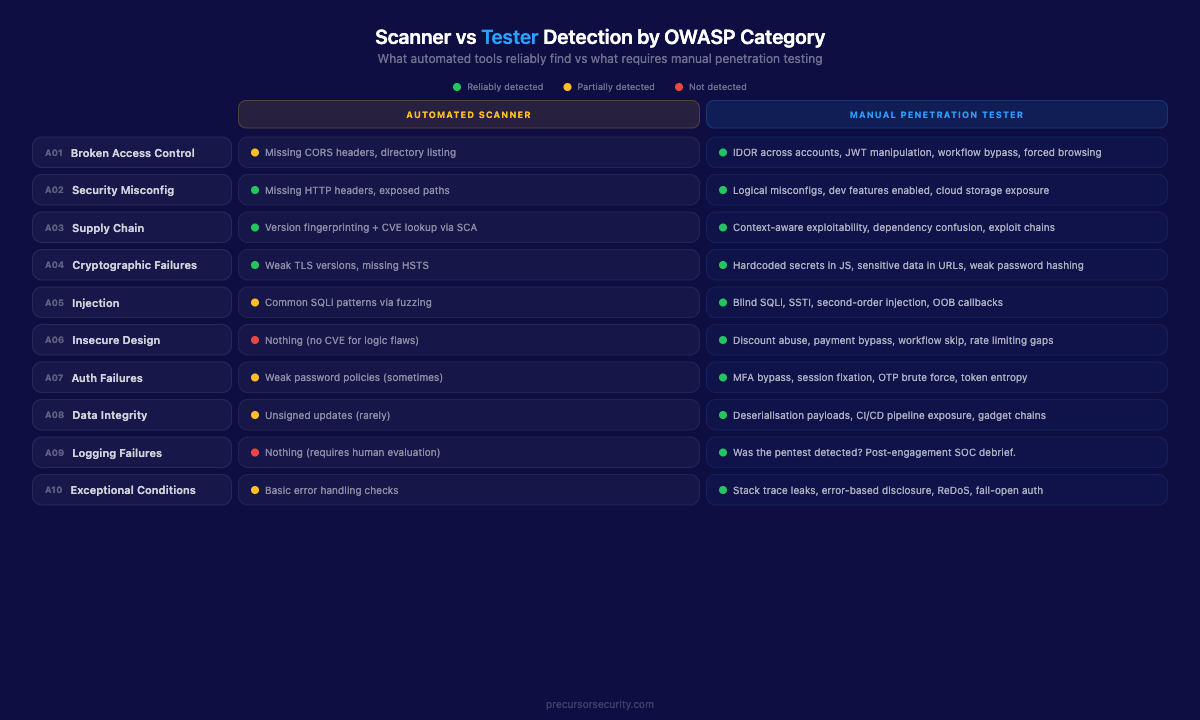

The table below shows the core split between what automated tools reliably find and what manual testing adds in each category. This distinction is the reason penetration testing exists.

| OWASP Category (2025) | What scanners reliably detect | What testers find that scanners miss | Tester technique |
|---|---|---|---|
| A01: Broken Access Control | Missing CORS headers, directory listing | IDOR between accounts, JWT claim manipulation, workflow bypass, admin endpoint forced browsing | Manual parameter substitution, role-switching between test accounts |
| A02: Security Misconfiguration | Missing HTTP headers, exposed admin paths | Logical misconfigurations, enabled dev features, verbose stack traces, cloud storage exposure | Manual config review, default credential testing |
| A03: Software Supply Chain Failures | Version fingerprinting + CVE lookup via SCA | Whether the CVE is exploitable given the specific deployment, exploit chains between components, malicious package detection | Context-aware CVE triage, dependency audit, manual exploit chain analysis |
| A04: Cryptographic Failures | Weak TLS versions, missing HSTS | Hardcoded secrets in JS bundles, sensitive data in URLs, weak hashing in grey-box source review | testssl.sh, manual JS inspection, grey-box source analysis |
| A05: Injection | Common SQLi patterns via automated fuzzing | Blind SQLi (time-based, OOB), SSTI, LDAP injection, second-order injection | Manual payload crafting, out-of-band callbacks, Burp Collaborator |
| A06: Insecure Design | Nothing (no CVE exists for logic flaws) | Discount abuse, negative quantity manipulation, payment flow bypass, rate limiting absence | Workflow mapping, business logic abuse, volumetric request testing |
| A07: Authentication Failures | Weak password policies (sometimes) | MFA bypass via response manipulation, session fixation, OTP brute force, token entropy issues | Active session interception, manual MFA flow testing |
| A08: Software or Data Integrity Failures | Unsigned update mechanisms (rarely) | Deserialisation payloads tailored to the target platform, CI/CD pipeline exposure | Platform-specific gadget chains, pipeline config review |
| A09: Security Logging and Alerting Failures | Nothing (requires human evaluation) | Whether the test itself was detected by the client's monitoring | Post-engagement detection debrief with client SOC |
| A10: Mishandling of Exceptional Conditions | Basic error handling checks | Unhandled exceptions leaking stack traces, error-based information disclosure, denial of service via malformed input | Edge case fuzzing, malformed request testing, error response analysis |

What does A01 Broken Access Control mean for a penetration tester?

Broken Access Control has been the top-ranked OWASP category since 2021, and for good reason. OWASP data shows it appears in 94% of applications that have been tested. It encompasses any failure to consistently enforce what authenticated users are and are not permitted to do.

The first technique is Insecure Direct Object Reference (IDOR) testing, documented under WSTG-ATHZ-04. A tester enumerates object identifiers in requests and observes whether the application enforces ownership. A URL like /invoice/4821 becomes /invoice/4820. A userId parameter in a POST body gets swapped to another account's ID. If the application returns data or performs an action it should not, access control has failed.
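The identifier-substitution check can be sketched in a few lines of Python. Everything here is hypothetical: the in-memory `INVOICES` table stands in for the application's database, and the two handlers stand in for a vulnerable and a corrected endpoint. The point is only to show what the tester is looking for, namely a 200 response carrying a record that belongs to someone else.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List, Optional, Tuple


@dataclass(frozen=True)
class Invoice:
    invoice_id: int
    owner: str
    total: str


# Hypothetical data standing in for the application's database.
INVOICES = {
    4820: Invoice(4820, "bob", "310.00"),
    4821: Invoice(4821, "alice", "125.50"),
}

Handler = Callable[[str, int], Tuple[int, Optional[Invoice]]]


def get_invoice_vulnerable(session_user: str, invoice_id: int) -> Tuple[int, Optional[Invoice]]:
    """Looks the object up by ID only and never asks who owns it."""
    invoice = INVOICES.get(invoice_id)
    return (200, invoice) if invoice else (404, None)


def get_invoice_checked(session_user: str, invoice_id: int) -> Tuple[int, Optional[Invoice]]:
    """Enforces ownership server-side; foreign IDs look like missing ones."""
    invoice = INVOICES.get(invoice_id)
    if invoice is None or invoice.owner != session_user:
        return (404, None)
    return (200, invoice)


def probe_idor(handler: Handler, session_user: str, own_id: int,
               candidate_ids: Iterable[int]) -> List[int]:
    """Return the IDs that were served although they belong to another user."""
    leaked = []
    for candidate in candidate_ids:
        if candidate == own_id:
            continue
        status, invoice = handler(session_user, candidate)
        if status == 200 and invoice is not None and invoice.owner != session_user:
            leaked.append(candidate)
    return leaked
```

Run against the vulnerable handler as `alice`, the probe reports invoice 4820; against the checked handler it reports nothing. In a real engagement the same comparison is made between two test accounts the tester controls.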

Vertical privilege escalation is tested next: can a standard user reach admin-only endpoints by navigating directly to them, or by manipulating role claims in tokens? Where JWT (JSON Web Token) authentication is in use, the tester inspects the token payload, modifies claims such as "role": "admin", and re-signs or strips the signature to check whether the application validates it.
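As a rough illustration of the JWT check, the sketch below builds an HS256 token with the standard library only, forges an unsigned copy with `"role": "admin"`, and shows a verifier that rejects it. The key, the claim names, and the helper functions are all assumptions made for the example; a real application would normally rely on a maintained JWT library rather than hand-rolled code like this.

```python
import base64
import hashlib
import hmac
import json
from typing import Optional


def b64url(data: bytes) -> str:
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode()


def b64url_decode(text: str) -> bytes:
    return base64.urlsafe_b64decode(text + "=" * (-len(text) % 4))


def sign_hs256(claims: dict, key: bytes) -> str:
    header = b64url(json.dumps({"alg": "HS256", "typ": "JWT"}).encode())
    payload = b64url(json.dumps(claims).encode())
    sig = hmac.new(key, f"{header}.{payload}".encode(), hashlib.sha256).digest()
    return f"{header}.{payload}.{b64url(sig)}"


def forge_unsigned(token: str, **overrides) -> str:
    """Tester side: rewrite claims, set alg to none, drop the signature."""
    _, payload, _ = token.split(".")
    claims = json.loads(b64url_decode(payload))
    claims.update(overrides)
    header = b64url(json.dumps({"alg": "none", "typ": "JWT"}).encode())
    return f"{header}.{b64url(json.dumps(claims).encode())}."


def verify_hs256(token: str, key: bytes) -> Optional[dict]:
    """Server side: accept HS256 only, and only with a matching signature."""
    try:
        header_b64, payload_b64, sig_b64 = token.split(".")
        header = json.loads(b64url_decode(header_b64))
        if header.get("alg") != "HS256":
            return None  # refuses alg=none and any unexpected algorithm
        expected = hmac.new(key, f"{header_b64}.{payload_b64}".encode(),
                            hashlib.sha256).digest()
        if not hmac.compare_digest(expected, b64url_decode(sig_b64)):
            return None
        return json.loads(b64url_decode(payload_b64))
    except (ValueError, TypeError):
        return None  # malformed tokens are rejected, never trusted
```

If the application under test accepts the output of `forge_unsigned`, or accepts a modified payload alongside the original signature, signature validation is not being enforced.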

Forced browsing rounds out the category: accessing paths not linked in the UI but present on the server, such as /admin, /backup, /api/internal/users, or /debug. The 2022 Optus breach is a documented real-world example of A01 at scale: an unauthenticated API endpoint accepted sequential customer ID parameters, returning different customers' records with each integer increment. Approximately 9.8 million records were exposed. An automated scanner would not have caught it, because the endpoint returned valid 200 responses to every request.

Scanners cannot understand data ownership or business logic. They do not know that orderId=5012 belongs to a different account. A human tester does.

What does A02 Security Misconfiguration look like in a real assessment?

Security Misconfiguration moved from fifth place in 2021 to second in 2025, reflecting how frequently configuration failures appear in real assessments. Unlike code vulnerabilities, this category captures operational failures that can occur at any layer of the stack and at any point in the application lifecycle.

Default credentials on administrative interfaces are tested first. Frameworks, content management systems, databases, and infrastructure components often ship with published default credentials that are never changed. A tester with access to a list of common defaults can try them in minutes.

The tester checks for enabled features with no legitimate purpose in production: debug endpoints, developer consoles (/debug, /actuator, /phpinfo.php), sample applications, and administrative interfaces exposed to the internet.

HTTP security headers are reviewed systematically: Content-Security-Policy, X-Frame-Options, X-Content-Type-Options, Strict-Transport-Security, Permissions-Policy. Missing or misconfigured headers enable secondary attack vectors. Absence of X-Frame-Options allows clickjacking. A weak or absent Content-Security-Policy allows XSS escalation.
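A presence check for the headers listed above is simple to sketch. The function below only reports which headers are absent from a response; it makes no judgement about whether a header that is present is configured well, which still needs manual review. The function name and the sample response are illustrative assumptions.

```python
from typing import List, Mapping

# The headers named in the text above.
EXPECTED_HEADERS = (
    "Content-Security-Policy",
    "X-Frame-Options",
    "X-Content-Type-Options",
    "Strict-Transport-Security",
    "Permissions-Policy",
)


def missing_security_headers(response_headers: Mapping[str, str]) -> List[str]:
    """Return expected headers that are absent (header names are case-insensitive)."""
    present = {name.lower() for name in response_headers}
    return [name for name in EXPECTED_HEADERS if name.lower() not in present]


# Hypothetical response headers captured during an assessment.
sample = {
    "content-type": "text/html",
    "x-content-type-options": "nosniff",
    "Strict-Transport-Security": "max-age=31536000",
}
print(missing_security_headers(sample))
```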

Verbose error messages that reveal stack traces, database connection strings, internal file paths, or framework versions provide attackers with reconnaissance data. Directory listing on web roots exposes source files, configuration files, and backup archives that were never intended to be public.

Where cloud infrastructure is in scope, misconfigured storage (publicly accessible S3 buckets, Azure Blob containers, or GCS buckets with anonymous read enabled) falls here.

What is A03 Software Supply Chain Failures and how do testers assess it?

Software Supply Chain Failures is new in the 2025 edition, replacing the narrower "Vulnerable and Outdated Components" from 2021. The expanded scope covers not just known CVEs in dependencies but also the integrity of the entire software supply chain: malicious packages, compromised build pipelines, and dependency confusion attacks.

Version enumeration remains the starting point. Response headers, error messages, and JavaScript files frequently disclose framework and library versions. The tester builds a component inventory and cross-references against the National Vulnerability Database (NVD) and vendor security advisories.

Where a vulnerable version is identified, the critical question is: is the CVE actually exploitable given this deployment? A Remote Code Execution (RCE) CVE in an internal library not reachable through the application's attack surface carries a different risk rating than the same CVE in a publicly facing component. Software Composition Analysis (SCA) tools flag the CVE either way. A penetration tester assesses exploitability in context.

The 2025 category also covers dependency confusion, where an attacker publishes a malicious package with the same name as an internal private package to a public registry. Build systems configured to check public registries first will pull the malicious version. Lockfile integrity, registry configuration, and package verification are reviewed where build pipeline access is in scope.

Exploit chains deserve specific attention: a CVE in one component that enables or amplifies access to a weakness in another. A vulnerable dependency used in authentication processing carries higher risk than the same CVE in a logging library with no external inputs.

What does A04 Cryptographic Failures mean in a pentest?

Cryptographic Failures moved from second place in 2021 to fourth in 2025. It encompasses failures in how data is protected in transit and at rest.

TLS configuration is tested using testssl.sh or SSLyze. The tester checks for weak cipher suites, deprecated protocol versions (TLS 1.0, TLS 1.1), missing HSTS (HTTP Strict Transport Security) headers, and certificate validity issues.

For data at rest, grey-box assessments include source code review to check how passwords are stored. Fast, general-purpose hashes applied to passwords, such as MD5 or SHA-1 with no salt, are a critical finding. Unsalted hashes of this kind can be reversed offline using rainbow tables or GPU-based cracking, often within hours.
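The difference a reviewer looks for in source code can be shown with the standard library. This is a minimal sketch, not a storage recommendation: PBKDF2-HMAC-SHA256 is used here only because it ships with Python, and the iteration count is an assumption that should be set from current guidance and the deployment's hardware.

```python
import hashlib
import hmac
import os
from typing import Tuple


def md5_unsalted(password: str) -> str:
    """The pattern that gets flagged: fast hash, no salt."""
    return hashlib.md5(password.encode()).hexdigest()


def hash_password(password: str, iterations: int = 600_000) -> Tuple[bytes, bytes, int]:
    """Salted, deliberately slow derivation (iteration count is an assumption)."""
    salt = os.urandom(16)  # unique per password, stored alongside the digest
    digest = hashlib.pbkdf2_hmac("sha256", password.encode(), salt, iterations)
    return salt, digest, iterations


def verify_password(password: str, salt: bytes, digest: bytes, iterations: int) -> bool:
    candidate = hashlib.pbkdf2_hmac("sha256", password.encode(), salt, iterations)
    return hmac.compare_digest(candidate, digest)
```

Two users with the same password produce identical output from `md5_unsalted`, which is exactly what makes precomputed tables effective. With a per-password salt the two stored digests differ, so each one has to be attacked separately.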

Hardcoded credentials or API keys in JavaScript bundles, HTML comments, or client-accessible configuration files are also in scope. Frontend JavaScript, even when minified, is readable. Testers search for patterns that suggest secrets: apiKey, password, token, secret, aws_access_key_id. Sensitive data appearing in URL parameters (which end up in server logs, browser history, and referrer headers) is flagged under this category.
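A first-pass search for those patterns can be approximated with a regular expression. The pattern below is an illustrative assumption, tuned for the five names mentioned above and for quoted values of at least eight characters; it will produce both false positives and false negatives, so every hit still needs a human to decide whether it is a live secret.

```python
import re
from typing import List, Tuple

# Key names from the text, followed by ':' or '=' and a quoted value.
SECRET_HINT = re.compile(
    r"\b(api[_-]?key|password|token|secret|aws_access_key_id)\b"
    r"\s*[:=]\s*"
    r"[\"']([^\"']{8,})[\"']",
    re.IGNORECASE,
)


def find_secret_hints(js_source: str) -> List[Tuple[str, str]]:
    """Return (name, value) pairs that look like hardcoded secrets."""
    return [(m.group(1), m.group(2)) for m in SECRET_HINT.finditer(js_source)]


# Hypothetical minified bundle fragment; the key value is a made-up placeholder.
bundle = 'var c={apiKey:"sk_test_EXAMPLE12345",retries:3};var t={token:"x"};'
print(find_secret_hints(bundle))
```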

A scanner handles TLS configuration reliably. Finding hardcoded secrets in minified JavaScript or identifying sensitive data leaking through URL parameters requires manual review.

How do penetration testers test for injection vulnerabilities (A05)?

Injection dropped from third place in 2021 to fifth in 2025, though it remains one of the most consequential categories when exploited. A05 covers SQL injection, OS command injection, LDAP injection, XPath injection, cross-site scripting (XSS), and Server-Side Template Injection (SSTI).

For SQL injection, the tester identifies all input vectors: form fields, URL parameters, HTTP headers, cookies, JSON body parameters. Each is tested with payloads designed to break query syntax. Classic error-based injection produces database errors in the response. Blind time-based injection uses payloads like '; IF(1=1) WAITFOR DELAY '0:0:5'-- and measures response time. Out-of-band (OOB) injection uses Burp Collaborator or interactsh to detect DNS or HTTP callbacks that confirm execution without any visible response change.
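Why a single quote breaks query syntax, and why parameterised queries do not have the problem, can be demonstrated with an in-memory SQLite database. This is a sketch under stated assumptions: the table, the two lookup functions, and the payloads are invented for illustration, and SQLite is used only because it ships with Python (the `WAITFOR DELAY` payload above is specific to Microsoft SQL Server and would not run here).

```python
import sqlite3
from typing import List


def make_db() -> sqlite3.Connection:
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT, is_admin INTEGER)")
    conn.executemany(
        "INSERT INTO users (name, is_admin) VALUES (?, ?)",
        [("alice", 0), ("root", 1)],
    )
    return conn


def lookup_vulnerable(conn: sqlite3.Connection, name: str) -> List[str]:
    """Builds the query by string concatenation: input becomes SQL."""
    query = f"SELECT name FROM users WHERE name = '{name}'"
    return [row[0] for row in conn.execute(query)]


def lookup_parameterised(conn: sqlite3.Connection, name: str) -> List[str]:
    """Binds the input as data: it can never change the query structure."""
    return [row[0] for row in conn.execute("SELECT name FROM users WHERE name = ?", (name,))]


conn = make_db()
payload = "' OR '1'='1"
print(lookup_vulnerable(conn, payload))      # every row comes back
print(lookup_parameterised(conn, payload))   # no user has that literal name
```

Sending a lone `'` to `lookup_vulnerable` raises `sqlite3.OperationalError`, which is the error-based signal described above; the parameterised version simply returns no rows.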

OS command injection targets parameters that interact with server-side processes: filenames, IP address inputs in network utilities, any field that might be passed to a shell. A tester probing a ping utility might inject ; id or | whoami and observe whether the response contains system user information.

SSTI (Server-Side Template Injection, WSTG-INPV-18) is particularly severe. Template engines like Jinja2, Twig, Freemarker, and Velocity process expressions in server-side templates. When user input is rendered inside a template without sanitisation, injecting {{7*7}} and receiving 49 in the response confirms the engine is evaluating expressions. From there, successful exploitation frequently leads to remote code execution on the application server.

For cross-site scripting, testers probe for reflected, stored, and DOM-based variants across all user-controlled output points.

Blind injection produces no visible error. The application responds normally while executing injected logic. Detecting it requires out-of-band infrastructure and human judgment to interpret ambiguous timing signals.

For a detailed breakdown of SQL injection types and testing methodology, see the dedicated guide on SQL injection.

What is Insecure Design (A06) and why is it impossible to scan for?

Insecure Design was added to the OWASP Top 10 in 2021 (as A04) and moved to A06 in 2025. It explicitly names a category that automated tools cannot test. It captures flaws in business logic and application workflow: cases where the application does exactly what it was coded to do, but the code fails to enforce correct business rules.

Business logic flaws require the tester to understand what the application is supposed to do, then look for ways to make it do something else. The techniques:

Workflow bypass: Multi-step processes often rely on the client progressing linearly. A payment flow with steps 1 (cart), 2 (checkout), 3 (payment), 4 (confirmation) might only enforce the business rule at step 3. A tester who jumps directly to step 4's endpoint, bypassing payment entirely, tests whether the server enforces the sequence server-side or trusts the client's progression.

Quantity and value manipulation: Sending a negative item quantity, a zero-price override, or a value below a minimum threshold tests whether the application validates numeric inputs against business rules rather than just data type.

Discount code abuse: Applying a discount code multiple times, applying it after the discount period, or stacking codes designed to be mutually exclusive tests whether one-use restrictions are enforced server-side.

Rate limiting absence: Submitting thousands of OTP codes, password reset attempts, or login requests to observe whether the application responds identically on the thousandth attempt as the first. Where no rate limiting exists, brute force becomes practical.
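The server-side checks these techniques probe for can be sketched as follows. The step names mirror the four-step payment flow described above; the `Order` class, the `validate_line` function, and the quantity ceiling are hypothetical and exist only to show what enforcement on the server might look like.

```python
from decimal import Decimal

STEPS = ("cart", "checkout", "payment", "confirmation")


class Order:
    """Tracks which steps the server itself has seen complete, in order."""

    def __init__(self) -> None:
        self.completed = []

    def advance(self, step: str) -> bool:
        if len(self.completed) >= len(STEPS):
            return False  # flow already finished
        if step != STEPS[len(self.completed)]:
            return False  # out-of-sequence request, e.g. jumping to confirmation
        self.completed.append(step)
        return True


def validate_line(quantity: int, unit_price: Decimal, max_quantity: int = 100) -> bool:
    """Business-rule validation, not just type validation."""
    return 1 <= quantity <= max_quantity and unit_price > 0


order = Order()
order.advance("cart")
order.advance("checkout")
print(order.advance("confirmation"))  # False: payment has not happened
```

A tester is effectively checking whether the real application behaves like this sketch, or whether it trusts the client to call the steps in order and to send sensible numbers.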

This category is explored in depth in the companion post on business logic vulnerabilities and what scanners miss. A scanner has no concept of intended behaviour or business context. A06 is where penetration testing most clearly separates from vulnerability scanning.

What do penetration testers look for under A07 Authentication Failures?

Authentication failures cover weaknesses in how the application establishes and maintains user identity. Verizon's 2024 Data Breach Investigations Report identifies stolen or brute-forced credentials as the leading initial access vector across all confirmed breaches globally.

Brute force resistance: Testers send a volume of requests to login endpoints, password reset flows, and OTP verification points. The application should engage account lockout, CAPTCHA, or rate limiting. Where it does not, credential stuffing and online brute force become practical attacks.

Session management (WSTG-SESS-01 through SESS-08): Session tokens must be long, randomly generated, and invalidated on logout. Session fixation vulnerabilities, where an attacker can set a known session ID before authentication completes and that token is accepted post-login, are probed by injecting a known value and observing post-authentication behaviour.
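The behaviour a session fixation test expects to see can be sketched with a toy session store. The class and method names are assumptions for illustration; the two properties that matter are that identifiers come from a cryptographically secure generator and that the identifier presented before login is discarded once authentication succeeds.

```python
import secrets
from typing import Dict, Optional


class SessionStore:
    def __init__(self) -> None:
        self._sessions: Dict[str, Optional[str]] = {}

    def create(self, user: Optional[str] = None) -> str:
        sid = secrets.token_urlsafe(32)  # 32 random bytes from the OS CSPRNG
        self._sessions[sid] = user
        return sid

    def login(self, presented_sid: str, user: str) -> str:
        """Discard the pre-authentication ID and issue a fresh one."""
        self._sessions.pop(presented_sid, None)
        return self.create(user)

    def logout(self, sid: str) -> None:
        self._sessions.pop(sid, None)  # server-side invalidation

    def user_for(self, sid: str) -> Optional[str]:
        return self._sessions.get(sid)


store = SessionStore()
fixed_sid = store.create()                 # value an attacker planted pre-login
new_sid = store.login(fixed_sid, "alice")  # victim authenticates
print(store.user_for(fixed_sid))           # None: the planted ID is dead
```

If the application under test keeps the same identifier across login, the planted value would resolve to the victim's session instead.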

MFA bypass: Where multi-factor authentication (MFA) is in scope, testers probe response manipulation (intercepting a failed MFA check and modifying the response code from 401 to 200), OTP code reuse (checking whether a used time-based code remains valid within its window), and brute forcing 6-digit numeric codes where no rate limiting exists. These techniques require an active session and manual request interception. Automated scanners cannot replicate them.
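The arithmetic behind OTP brute forcing is straightforward: a 6-digit numeric code has 1,000,000 possible values, so an attacker limited to five guesses has a 5 in 1,000,000 chance, while an attacker with unlimited guesses is certain to succeed. The sketch below models a verifier with an attempt limit and single-use codes; the class, the limit of five, and the return values are illustrative assumptions rather than any particular product's behaviour.

```python
import hmac
from typing import Optional


class OtpVerifier:
    def __init__(self, expected_code: str, max_attempts: int = 5) -> None:
        self._expected = expected_code.encode()
        self._max_attempts = max_attempts
        self._failures = 0
        self._used = False

    def verify(self, submitted: str) -> str:
        if self._failures >= self._max_attempts:
            return "locked"    # stops online brute force
        if self._used:
            return "rejected"  # a code that already succeeded cannot be replayed
        if hmac.compare_digest(submitted.encode(), self._expected):
            self._used = True
            return "ok"
        self._failures += 1
        return "invalid"


def brute_force(verifier: OtpVerifier) -> Optional[int]:
    """Try every 6-digit code in order; return attempts used, or None if locked out."""
    for n in range(1_000_000):
        result = verifier.verify(f"{n:06d}")
        if result == "ok":
            return n + 1
        if result == "locked":
            return None
    return None
```

With the limit effectively removed, the sequential search always finds the code; with five attempts allowed, it is locked out almost immediately.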

Password policy review: Minimum length, complexity requirements, and whether the application prevents reuse of recently used passwords. Short maximum password limits (which truncate passphrases) are a secondary finding that often indicates poor backend storage practices.

Why are A08 Software or Data Integrity Failures difficult to test?

This category covers scenarios where code or data is consumed without adequate integrity verification. It includes insecure deserialisation and supply chain risks in the CI/CD pipeline.

Insecure deserialisation: Tested wherever the application accepts serialised objects: cookies containing serialised data, API parameters, file upload endpoints. The tester identifies the serialisation format (Java native serialisation, PHP serialize format, Python pickle, .NET BinaryFormatter) and attempts to inject a crafted payload that executes code during deserialisation. Tools like ysoserial generate gadget chain payloads for Java deserialisation targets; out-of-band detection via Burp Collaborator or interactsh confirms blind execution.
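Python's `pickle` makes the underlying problem easy to see. In this deliberately harmless sketch, the hypothetical `Gadget` class tells pickle to call `int("1337")` when the data is loaded; a real payload would name a dangerous callable instead. The object that comes back is whatever that call returned, which shows that loading the data executed a function chosen by whoever produced the bytes.

```python
import json
import pickle


class Gadget:
    def __reduce__(self):
        # Harmless stand-in: pickle will call int("1337") at load time.
        # A hostile payload would reference a command-execution function here.
        return (int, ("1337",))


payload = pickle.dumps(Gadget())
result = pickle.loads(payload)  # the callable runs during deserialisation

print(type(result).__name__, result)

# A data-only format has no such hook: parsing yields plain values only.
profile = json.loads('{"role": "user"}')
print(profile["role"])
```

This is why untrusted input should never reach `pickle.loads`, and why testers pay attention to any cookie or parameter that looks like a serialised native object.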

Unsigned update mechanisms: Applications that load plugins, updates, or configuration from external sources without verifying digital signatures allow an attacker with network control or the ability to manipulate the source to substitute malicious content.

CI/CD pipeline exposure: Hardcoded secrets in pipeline configuration files, overly permissive repository access controls, or pipeline configurations that can be influenced by code contributed via pull requests from external accounts fall under this category. The tester reviews pipeline configuration where it is in scope.

Generic scanners produce high false-positive rates on deserialisation because payload detection requires knowledge of the target platform's specific serialisation implementation.

How do penetration testers evaluate A09 Security Logging and Alerting Failures?

A09 is the one category where the test itself is the evidence. Inadequate logging and monitoring means attacks go undetected, and the window between initial compromise and discovery extends from hours to months.

After completing the assessment, the tester asks: was any of this activity detected? If the client's security team or Security Operations Centre (SOC) received no alerts during a week of active web application testing involving systematic parameter fuzzing, authentication probing, and privilege escalation attempts, that absence is a finding in its own right.

The tester checks whether authentication events are logged, whether failed login attempts generate alerts, and whether anomalous request patterns appear anywhere in application or server logs. Log entries should capture sufficient context: timestamp, source IP address, user account, action taken, and outcome. Logs that capture only errors, not successful authentications or access control decisions, are insufficient.
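A log entry carrying the context listed above might look like the following. This is a minimal sketch using the standard library; the field names, the logger name, and the choice of JSON lines are assumptions, and the IP address comes from a range reserved for documentation.

```python
import json
import logging
from datetime import datetime, timezone

logger = logging.getLogger("security")


def security_event(action: str, outcome: str, user: str, source_ip: str) -> str:
    """Build one JSON log line with the minimum context an investigator needs."""
    return json.dumps(
        {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "source_ip": source_ip,
            "user": user,
            "action": action,
            "outcome": outcome,
        },
        sort_keys=True,
    )


def log_login(user: str, source_ip: str, success: bool) -> str:
    """Record successes as well as failures; both matter during an investigation."""
    line = security_event("login", "success" if success else "failure", user, source_ip)
    if success:
        logger.info(line)
    else:
        logger.warning(line)  # failures are what alerting rules usually key on
    return line


print(log_login("alice", "203.0.113.7", success=False))
```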

Where testers also conduct network-layer testing or work within a broader purple team engagement, detection coverage can be assessed systematically. Whether the monitoring infrastructure observed the pentest is directly predictive of whether it would observe a real attacker using equivalent techniques.

What is A10 Mishandling of Exceptional Conditions?

Mishandling of Exceptional Conditions is new in the 2025 edition. It takes the tenth position previously held by SSRF, which OWASP has folded into A01 Broken Access Control. A10:2025 covers what happens when an application encounters unexpected input, resource exhaustion, or error states and fails to handle them securely.

Unhandled exceptions leaking information. When an application throws an unhandled exception, the default error page frequently reveals stack traces, framework versions, database connection strings, internal file paths, and configuration details. This reconnaissance data accelerates subsequent attacks. A tester submits malformed input (unexpected data types, oversized values, null bytes, Unicode edge cases) to every input point and examines what the error responses contain.

Error-based information disclosure. Beyond stack traces, different error responses for different failure conditions can reveal application logic. A login endpoint that returns "user not found" for invalid usernames and "incorrect password" for valid usernames with wrong passwords enables username enumeration. A file access endpoint that returns a different HTTP status code for "file exists but access denied" versus "file does not exist" reveals the file system structure. Testers systematically compare error responses to extract information the application was not designed to share.

Denial of service via malformed input. Inputs designed to trigger resource-intensive error handling paths can cause denial of service without volumetric attacks. An XML payload with recursive entity expansion (billion laughs attack), a regular expression payload that triggers catastrophic backtracking (ReDoS), or a deeply nested JSON structure that exhausts the parser's stack are all A10 territory. The tester probes for these conditions to determine whether the application degrades gracefully or becomes unavailable.

Exception handling that fails open. The most dangerous pattern: an error in the authentication or authorisation path that causes the application to default to "allow" rather than "deny." If a permissions check throws an exception and the catch block does not explicitly deny access, the user proceeds as if authorised. Testers deliberately trigger error conditions in authentication flows and observe whether the application maintains its security posture under failure.
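The fail-open pattern is easiest to recognise side by side with its fix. In the sketch below, `broken_check` is a hypothetical permissions check whose backing service is unavailable; the two wrapper functions differ only in what happens when that check raises.

```python
from typing import Callable

Check = Callable[[str, str], bool]


def is_allowed_fail_open(check: Check, user: str, resource: str) -> bool:
    """Flawed: an exception is swallowed and execution falls through to allow."""
    try:
        if not check(user, resource):
            return False
    except Exception:
        pass  # error ignored; nothing denies access
    return True


def is_allowed_fail_closed(check: Check, user: str, resource: str) -> bool:
    """Any error, or anything other than an explicit True, means deny."""
    try:
        return check(user, resource) is True
    except Exception:
        return False


def broken_check(user: str, resource: str) -> bool:
    # Hypothetical failure: the policy backend cannot be reached.
    raise TimeoutError("policy service unavailable")


print(is_allowed_fail_open(broken_check, "mallory", "/admin"))    # True
print(is_allowed_fail_closed(broken_check, "mallory", "/admin"))  # False
```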

Note: SSRF (Server-Side Request Forgery) remains a tested vulnerability class in every web application penetration test. Its removal from the Top 10 as a standalone category does not reduce its importance. Testers continue to probe for SSRF wherever the application accepts URLs, fetches remote content, or interacts with internal services. In the 2025 edition it is assessed under A01 Broken Access Control, alongside related injection and misconfiguration checks.

What does the OWASP Top 10 miss?

OWASP explicitly states that the Top 10 is an awareness document, not a testing methodology. The risk for organisations that scope penetration tests exclusively against the OWASP Top 10 is that they create a false sense of coverage over a wider attack surface.

Race conditions: Concurrent request attacks exploit non-atomic operations. When a balance check and a debit operation are not wrapped in a transaction, parallel requests can each pass the check and each execute the debit. This is not covered by any OWASP Top 10 category. It requires custom concurrency testing.
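The fix the tester is checking for is that the balance check and the debit happen as one atomic step. The sketch below uses a thread lock as a stand-in for a database transaction or row lock; the `Account` class and the helper that fires parallel withdrawals are hypothetical and only model the idea.

```python
import threading


class Account:
    def __init__(self, balance: int) -> None:
        self.balance = balance
        self._lock = threading.Lock()

    def withdraw(self, amount: int) -> bool:
        # Check and debit under one lock, so no other request can interleave
        # between reading the balance and updating it.
        with self._lock:
            if self.balance >= amount:
                self.balance -= amount
                return True
            return False


def parallel_withdraw(account: Account, amount: int, workers: int) -> int:
    """Fire concurrent withdrawals and return how many succeeded."""
    results = []

    def attempt() -> None:
        results.append(account.withdraw(amount))

    threads = [threading.Thread(target=attempt) for _ in range(workers)]
    for thread in threads:
        thread.start()
    for thread in threads:
        thread.join()
    return sum(results)


account = Account(100)
print(parallel_withdraw(account, 100, 20), account.balance)
```

Without the lock, two requests could both read a balance of 100 before either writes, and both would succeed. A concurrency test sends the parallel requests and checks whether more than one of them was honoured.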

WebSocket vulnerabilities: Cross-site WebSocket hijacking and authentication bypass via protocol upgrade are distinct attack vectors. A WebSocket connection that inherits session authentication without re-validating credentials is exploitable by a malicious page that initiates a cross-origin WebSocket connection. OWASP WSTG has a dedicated section (WSTG-CLNT-10) but this is outside the Top 10 framing.

GraphQL-specific issues: Introspection queries that expose the full schema, field-level authorisation bypass, query depth exhaustion, and batching attacks enabling enumeration at scale require GraphQL-specific testing procedures. A01 covers the resulting access control failure; the test technique is entirely different.

OAuth 2.0 and OIDC implementation flaws: Open redirect exploitation in the redirect_uri parameter, missing state parameter CSRF protection, and implicit flow token interception are OAuth-specific attack classes that require dedicated testing procedures beyond A07.

Chained attack paths: Where three individually low-severity findings (an information disclosure, a CORS misconfiguration, and a stored XSS) combine into a critical exploit chain requiring no user interaction beyond visiting a malicious page. A Top 10 checklist approach files each finding separately. A skilled tester traces the chain.

A thorough web application penetration test uses the OWASP Top 10 as a baseline and extends coverage based on the application's architecture, technology stack, and the data it handles. The OWASP Top 10 defines the floor.

Frequently asked questions

What is the OWASP Top 10? The OWASP Top 10 is a ranked list of the ten most critical web application security risk categories, published by the Open Worldwide Application Security Project. The current edition was released in 2025, succeeding the 2021 version. It is used by penetration testers as a baseline for web application security assessments. Each entry is a risk category, not a single vulnerability. A01 Broken Access Control alone encompasses IDOR, privilege escalation, JWT manipulation, and forced browsing.

Is the OWASP Top 10 a checklist for penetration testing? No. OWASP explicitly describes the Top 10 as an awareness document. Professional penetration testers use the OWASP Web Security Testing Guide (WSTG) v4.2 for test procedures, which contains 91 individual test cases. The OWASP Top 10 defines the risk categories; WSTG defines how to test for them. Organisations that scope a penetration test exclusively against the Top 10 may miss race conditions, WebSocket vulnerabilities, OAuth implementation flaws, and chained attack paths.

Which OWASP Top 10 category is most commonly found in pentests? Broken Access Control (A01) is the most prevalent category, appearing in 94% of tested applications in OWASP's dataset, and consistently tops real-world pentest findings. IDOR vulnerabilities, a sub-class of A01, are also consistently the top finding by volume in bug bounty programmes. Security Misconfiguration (A02 in the 2025 edition) is the second-most prevalent.

Can an automated scanner test all OWASP Top 10 categories? No. Scanners reliably cover parts of A04 (TLS configuration), A02 (missing HTTP headers), and A03 (known CVEs via version fingerprinting). They cannot test A06 Insecure Design at all, because no CVE exists for business logic flaws. A09 Logging and Alerting Failures requires a human to evaluate whether the assessment itself was detected. A01 IDOR, A07 MFA bypass, and A10 Mishandling of Exceptional Conditions all require manual techniques. The categories that cause the most damage (access control and business logic) are the ones automated tools cover least well.

How often is the OWASP Top 10 updated? Approximately every three to four years. The 2025 edition is the latest, succeeding the 2021 version. The 2025 revision introduced two new categories: Software Supply Chain Failures (A03, expanding beyond the previous Vulnerable and Outdated Components) and Mishandling of Exceptional Conditions (A10, replacing SSRF as a standalone category). OWASP also maintains separate lists for APIs (OWASP API Security Top 10, updated in 2023) and mobile applications (OWASP Mobile Top 10, updated in 2024).