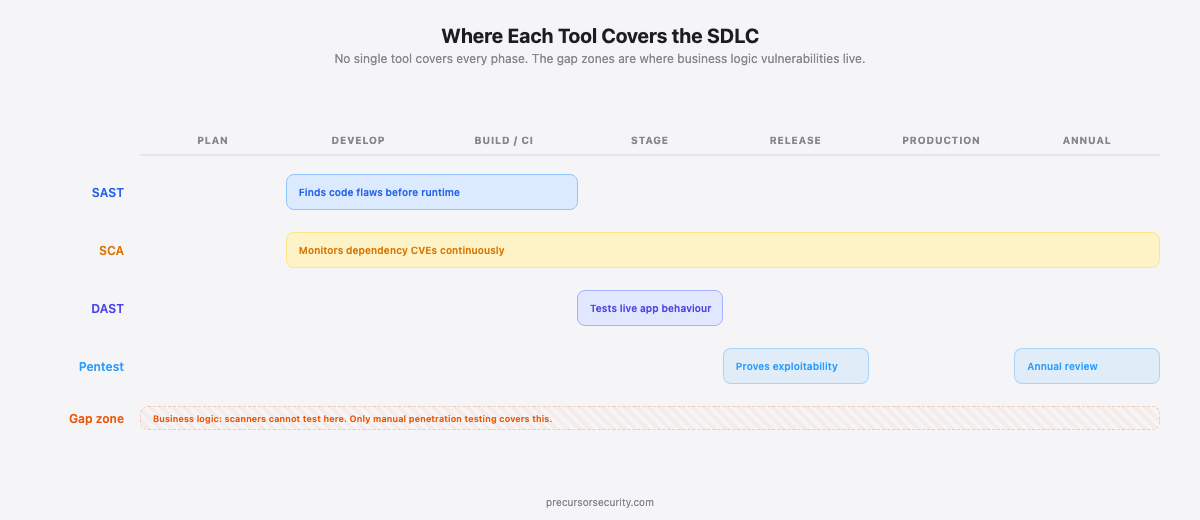

SAST (Static Application Security Testing) scans source code for vulnerabilities before the application runs. DAST (Dynamic Application Security Testing) probes a live application from the outside to find runtime vulnerabilities. SCA (Software Composition Analysis) checks third-party dependencies for known CVEs. Penetration testing is a manual, expert-led assessment that chains findings together and tests what automated tools cannot reach: business logic flaws, authentication bypasses, and real-world attack paths. Web application security testing requires all four layers working together.

What is SAST?

Static Application Security Testing analyses your source code, bytecode, or binaries without executing the application. It integrates directly into your development pipeline and flags vulnerabilities as code is written, before any line ships to production.

SAST tools check for common coding flaws: SQL injection, hardcoded secrets, insecure cryptography, buffer overflows, and unsafe function calls. Widely used tools include SonarQube (v10.x, supports 29 languages), Checkmarx One (enterprise, AI-assisted triage), and Semgrep (open source, community rule sets). Most integrate natively into CI/CD pipelines via GitHub Actions, GitLab CI, or Jenkins, so findings appear in the same pull request that introduced the flaw.
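To make the SQL injection case concrete, here is a minimal sketch of the pattern a SAST rule typically flags, next to the parameterised form it would accept. The table, the function names, and the `make_demo_db` helper are illustrative assumptions, not taken from any particular tool's rule set.

```python
import sqlite3

def find_user_unsafe(conn, username):
    # String-built SQL: user input becomes part of the statement itself.
    # This concatenation pattern is what static analysis usually flags.
    query = "SELECT id, name FROM users WHERE name = '" + username + "'"
    return conn.execute(query).fetchall()

def find_user_safe(conn, username):
    # Parameterised query: the driver binds the value, so it is never parsed as SQL.
    return conn.execute(
        "SELECT id, name FROM users WHERE name = ?", (username,)
    ).fetchall()

def make_demo_db():
    # Hypothetical in-memory database used only for this illustration.
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
    conn.executemany("INSERT INTO users (name) VALUES (?)", [("alice",), ("bob",)])
    return conn
```

Passing `x' OR '1'='1` as the username returns every row from the unsafe function and nothing from the safe one. A static scanner can spot the difference from the code alone, without running either function.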

The core business case for SAST is timing. Research from the IBM Systems Sciences Institute puts the cost of fixing a production vulnerability at approximately 30 times more than fixing the same issue during development. Finding a SQL injection flaw before it merges is categorically cheaper than finding it after deployment.

The limitations are significant. Commercial SAST tools produce false positive rates between 30% and 50%, with some rule sets running higher. A 2023 Forrester analysis noted that organisations with poorly configured SAST programmes spend up to 40% of developer time triaging false positives rather than fixing genuine vulnerabilities. The underlying problem: SAST has no runtime context. It cannot determine whether a flagged code path is actually reachable, whether a framework sanitises input elsewhere, or whether the vulnerability produces any exploitable effect in your specific deployment.

Most critically, SAST cannot find business logic vulnerabilities. Those require understanding how the application is supposed to behave, not just how the code is structured.

What is DAST?

Dynamic Application Security Testing tests a running application from the outside, simulating how an attacker probes a live target. The tool sends crafted HTTP requests to your application and analyses the responses to identify vulnerabilities: cross-site scripting (XSS), SQL injection (where the payload produces an observable response), insecure server configurations, and exposed error messages.

Common tools include OWASP ZAP v2.14+ (open source, active and passive scan modes), Burp Suite Professional (v2024.x, the industry standard for both manual and automated testing), and Invicti (commercial, "Proof-Based Scanning" that automatically confirms exploitability rather than just flagging patterns).

Because DAST operates at runtime, it finds issues that SAST misses: server misconfigurations, unsafe HTTP response headers, certificate problems, and vulnerabilities introduced by infrastructure rather than application code. It can also confirm whether flaws flagged by SAST are actually exploitable in the running environment, which reduces the false positive burden.
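A passive response-header check is one of the simplest things a dynamic scanner does. The sketch below assumes you already have the response headers as a dictionary; the header list and the wording of each reason are illustrative choices, not the rule set of ZAP, Burp Suite, or Invicti.

```python
# Illustrative subset of headers a passive scan might expect on an HTTPS response.
REQUIRED_HEADERS = {
    "strict-transport-security": "HSTS not set; browsers may allow plain HTTP",
    "content-security-policy": "No CSP; injected scripts are less contained",
    "x-content-type-options": "MIME sniffing not disabled",
}

def missing_security_headers(response_headers):
    """Return (header, reason) pairs for expected headers absent from a response."""
    # Header names are case-insensitive, so compare in lower case.
    present = {name.lower() for name in response_headers}
    return [(h, why) for h, why in REQUIRED_HEADERS.items() if h not in present]
```

Nothing in the application's source code reveals these gaps if the headers are set, or not set, by a reverse proxy or load balancer. That is why this class of finding belongs to runtime testing.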

DAST has its own constraints. Without correct authentication configuration, it cannot reach any pages behind a login screen. For most applications, that means the majority of the attack surface goes untested. Crawl-based discovery also struggles with JavaScript-heavy single-page applications where routes load dynamically and require browser rendering to discover. And because DAST tests from the outside, it has no visibility into server-side code paths. It detects only vulnerabilities that produce an observable response.

What is SCA?

Software Composition Analysis scans your application's third-party dependencies, open source libraries, and package manager lock files to identify components with known CVEs.

This matters more than most development teams appreciate. The Snyk Open Source Security Report 2024 found that 84% of codebases contained at least one vulnerability in an open source dependency. The Synopsys OSSRA 2024 report found that 96% of commercial codebases contained open source components, with modern web applications consisting of 60-80% open source code by line count. The attack surface is the entire dependency tree, not just code your team wrote.

SCA tools including Snyk, Dependabot, and Black Duck continuously monitor your dependencies against vulnerability databases (NVD, OSV, GitHub Advisory Database) and alert when a component receives a new CVE. Dependabot raises automated pull requests to update vulnerable packages. Black Duck adds licence compliance tracking for enterprises with open source governance requirements.

PCI DSS v4.0 Requirement 6.3.2 formalises this: organisations processing card data must maintain an inventory of bespoke and custom software and third-party components, with a process to manage vulnerabilities in those components.

SCA runs continuously and integrates into the same CI/CD pipeline as SAST. Unlike SAST and DAST, it does not scan your application's own logic. It only checks whether the libraries you depend on have known weaknesses. A newly disclosed CVE in a library you have never scrutinised can become your highest-priority vulnerability overnight, and SCA is the only layer that catches it automatically. A true zero-day, with no published advisory, stays invisible to SCA until it is disclosed.
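At its core, an SCA check is a comparison between pinned versions and advisory data. The sketch below uses hypothetical package names and advisory IDs and a deliberately simplified version parser; real tools draw on NVD, OSV, or the GitHub Advisory Database and handle far messier version schemes.

```python
def parse_version(text):
    # Simplified: handles dotted numeric versions such as "2.4.1" only.
    return tuple(int(part) for part in text.split("."))

# Hypothetical advisory data, invented for this example.
ADVISORIES = [
    {"package": "examplelib", "fixed_in": "2.4.1", "id": "DEMO-0001"},
    {"package": "otherlib", "fixed_in": "1.0.5", "id": "DEMO-0002"},
]

def vulnerable_dependencies(pinned):
    """pinned maps package name -> installed version string (as in a lock file)."""
    findings = []
    for adv in ADVISORIES:
        installed = pinned.get(adv["package"])
        # A dependency is affected when its version is older than the fixed release.
        if installed and parse_version(installed) < parse_version(adv["fixed_in"]):
            findings.append((adv["package"], installed, adv["id"]))
    return findings
```

The check needs re-running whenever the advisory list changes, not only when the lock file does, which is why SCA is scheduled continuously instead of being tied to commits.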

What is penetration testing?

A web application penetration test is a manual assessment where a qualified security tester actively attempts to compromise your application, using the same techniques a real attacker would use.

Penetration testers do not just run scans. They chain findings together: a low-severity information disclosure finding combined with a misconfigured API endpoint and a weak password policy becomes a path to full account takeover. They test business logic: can you manipulate a discount code to make orders free? Can you access another user's data by changing an ID in a URL? Can you bypass a payment step by replaying a prior request? None of these are detected by automated tools. For applications with separate front-end and API layers, testers assess both surfaces together. Our guide to API vs web application penetration testing explains when and why combined testing matters.
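The "change an ID in a URL" question is an insecure direct object reference (IDOR). A minimal sketch, with an invented invoice store and function names, shows why pattern-based scanning tends to miss it: the flawed handler contains no dangerous function call and no injectable string.

```python
# Hypothetical data store used only for this illustration.
INVOICES = {
    101: {"owner": "alice", "total": 120},
    102: {"owner": "bob", "total": 75},
}

def get_invoice_unsafe(current_user, invoice_id):
    # Returns whatever ID is requested. Syntactically clean, logically broken:
    # nothing ties the record to the user asking for it.
    return INVOICES.get(invoice_id)

def get_invoice_safe(current_user, invoice_id):
    invoice = INVOICES.get(invoice_id)
    # Ownership check: the rule that only the application's design can define.
    if invoice is None or invoice["owner"] != current_user:
        raise PermissionError("invoice not found for this user")
    return invoice
```

Whether alice should be able to read invoice 102 is a question about the application's intended behaviour. A tester who understands the data model asks it; a scanner matching known patterns has no basis for doing so.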

OWASP ASVS v4.0 is explicit on this point. Levels 2 and 3 verification cannot be achieved through automated testing alone. The standard specifically requires manual testing of business logic (V11), authentication (V2), session management (V3), and access control (V4). OWASP's own Testing Guide v4.2 covers 91 individual test cases; automated DAST tools achieve high-confidence coverage on fewer than 40% of them.

The OWASP Top 10 categories that cause the most significant breaches, A01 (Broken Access Control), A04 (Insecure Design), and A07 (Identification and Authentication Failures), are consistently missed or under-tested by automated tools. A tester working through these categories exercises contextual judgment: they know your application's data model, understand what a user should and should not be able to access, and can recognise when an unexpected server response indicates exploitable behaviour rather than a benign anomaly.

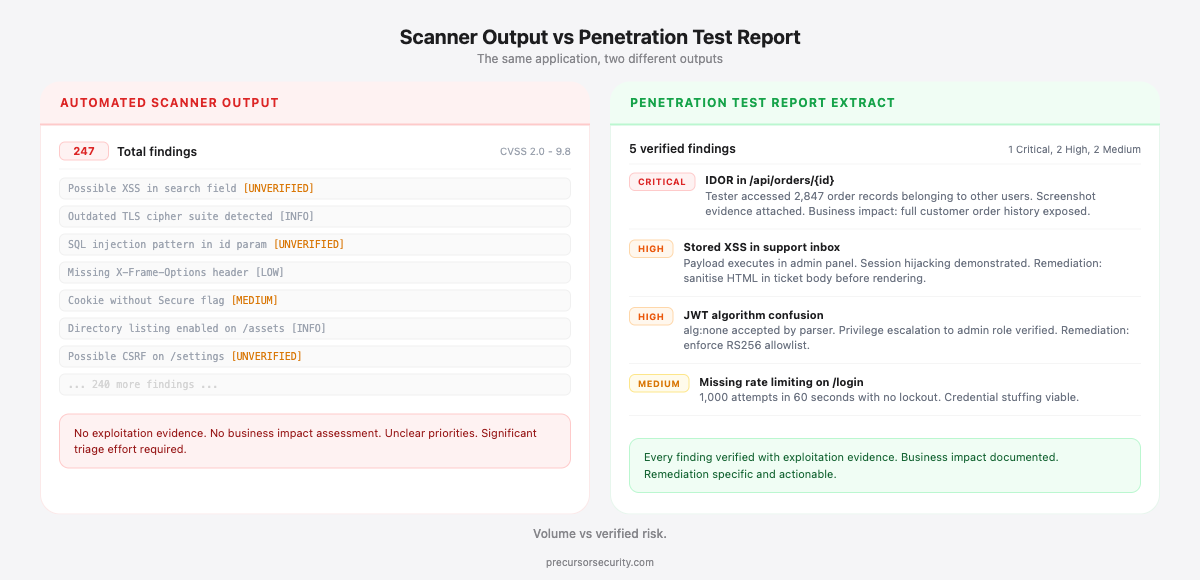

The output of a penetration test is not a list of CVEs. It is a narrative report with exploitation evidence, screenshots or video proof of access, business impact analysis, and prioritised remediation guidance. The gap between DAST and penetration testing is the gap between "this input field might be vulnerable to SQL injection" and "we extracted 45,000 customer records from this input field using this exact payload."

How SAST, DAST, SCA, and penetration testing compare

| | SAST | DAST | SCA | Penetration Testing |
|---|---|---|---|---|
| What it tests | Source code, binaries | Running app from outside | Third-party dependencies | Full app: logic, auth, business flows, chained exploits |
| When in SDLC | Development, CI/CD | Staging, pre-release | Continuously, from dev onward | Before major releases, annually at minimum |
| Speed | Fast (minutes) | Slow (hours per crawl) | Fast (minutes) | Days to weeks |
| False positive rate | High (30-50%+ on commercial tools) | Medium (runtime context reduces noise) | Low (CVE match is binary) | Very low (manually verified exploitation) |
| Business logic coverage | None | None | None | Core capability |
| Authentication coverage | Full (reads all code paths) | Limited (requires configuration) | N/A | Full (tester uses real credentials) |
| Regulatory mandate | NIST SSDF PW.7.2 | OWASP ASVS Level 2+ | PCI DSS Req. 6.3.2 | PCI DSS Req. 11.4, DORA Art. 26, ISO 27001 A.8.8 |
| Cost model | Free (Semgrep) to £20,000+/yr | Free (ZAP) to £15,000+/yr | Free (Dependabot) to £30,000+/yr | £3,000 to £20,000+ per engagement |
| Example tools | SonarQube, Checkmarx, Semgrep | OWASP ZAP, Burp Suite, Invicti | Snyk, Dependabot, Black Duck | CREST-accredited testers |

The key column is business logic coverage. Every automated tool in the table returns "None." That is not a tool limitation that will be resolved in the next release. It is a fundamental constraint of automated testing: scanners test against known patterns, but business logic flaws are unique to each application's specific design.

When should you use each tool?

SAST belongs in your CI/CD pipeline from day one. Every pull request should trigger a scan. Developers get feedback in the environment they already work in and can fix flaws before they merge. The cost per finding at this stage is at its lowest. Configure meaningful quality gates: block on high-severity findings, warn on medium, suppress accepted-risk items to control false positive noise.
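The quality gate described above (block on high, warn on medium, suppress accepted-risk items) can be sketched as a small decision function. The finding format and the `accepted_risk_ids` parameter are assumptions for illustration; each SAST product exposes its own gate configuration.

```python
def evaluate_gate(findings, accepted_risk_ids=frozenset()):
    """Return ("block" | "warn" | "pass", the findings that drove the decision)."""
    # Drop findings the team has formally accepted, to keep noise down.
    active = [f for f in findings if f["id"] not in accepted_risk_ids]
    high = [f for f in active if f["severity"] == "high"]
    if high:
        return "block", high
    medium = [f for f in active if f["severity"] == "medium"]
    if medium:
        return "warn", medium
    return "pass", []
```

A pipeline step would fail the build on `"block"`, annotate the pull request on `"warn"`, and stay silent on `"pass"`. Keeping the accepted-risk list explicit and reviewed stops suppression from becoming a way to hide real findings.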

DAST belongs in staging, where your application runs in an environment that mirrors production but does not carry live data. Running DAST against production risks data corruption and denial-of-service conditions from active payload injection. Integrate it into pre-release checks so no deployment ships without a baseline scan of your live endpoints.

SCA should run continuously. Dependency vulnerabilities are not introduced by your team's commits, so a scan that runs only when code changes will miss them. A library you installed six months ago may receive a critical CVE tomorrow. Dependabot and Snyk watch your dependency tree around the clock and raise alerts when that happens. The average time to fix a known open source vulnerability is 49 days (Snyk data); critical CVEs in widely used packages are typically exploited within days of disclosure.

Penetration testing should run at least annually and before any major release, infrastructure migration, or feature launch that materially changes your attack surface. For applications handling payment card data, PCI DSS v4.0 Requirement 11.4 mandates annual web application penetration testing. For regulated financial services within scope of DORA, Article 26 requires threat-led penetration testing. Most mid-market organisations run annual tests with additional targeted engagements around significant product changes.

These are complementary layers. Replacing one with another leaves a gap. A SaaS platform that relies on DAST and skips penetration testing may pass automated scans with no critical findings, then fail a pentest that finds a checkout flow manipulation paying out to attackers rather than charging them. The DAST scanner tested the discount field for injection patterns. It had no mechanism to test whether the business logic of the checkout flow produced unintended monetary outcomes.
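A checkout flaw of this kind can be as small as a missing lower bound. The sketch below is a hypothetical illustration with invented function names and discount codes, not a reconstruction of any real incident: the unsafe version accepts repeated codes and lets the total go negative.

```python
from decimal import Decimal

def checkout_total_unsafe(subtotal, discount_codes, discounts):
    # Applies every submitted code, including repeats, with no lower bound.
    total = subtotal
    for code in discount_codes:
        total -= discounts.get(code, Decimal("0"))
    return total

def checkout_total_safe(subtotal, discount_codes, discounts):
    # Each code counts once, and the total can never drop below zero.
    reduction = sum(
        (discounts.get(code, Decimal("0")) for code in set(discount_codes)),
        Decimal("0"),
    )
    return max(subtotal - reduction, Decimal("0"))
```

With a subtotal of 25 and the same 10-unit code submitted four times, the unsafe function returns -15 and the safe one returns 15. Every individual request looks well-formed, so an injection-focused scan of the discount field has nothing to report.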

Why penetration testing is the validation layer

Automated tools flag possibilities. Penetration testers prove exploitability.

SAST outputs a list of potential vulnerabilities in your code. DAST outputs a list of potential vulnerabilities in your running application. Both lists contain false positives. Both miss entire vulnerability categories. Neither tells you whether the findings are exploitable in your specific environment, what the actual business impact would be, or whether an attacker could chain multiple low-severity findings into a critical breach path.

Penetration testing does all three. A tester does not stop at "SQL injection detected in the search field." They extract data, demonstrate the scope of access, and document exactly what a real attacker would obtain. That proof changes remediation priorities. A scanner report with 200 findings of varying severity, many unverified, makes prioritisation genuinely difficult. A pentest report with five findings, each backed by exploitation evidence and a documented business impact, drives action.

Verizon's DBIR 2024 found that web application attacks remain the top attack vector for confirmed data breaches, with the majority involving authentication failures and access control weaknesses. These are precisely the categories that automated tools under-test. A 2022 study published in the Journal of Computer Security found that automated tools missed 85% of business logic vulnerabilities in tested applications.

NIST SSDF PW.8.2 addresses this directly: software should be thoroughly tested in representative environments using both automated and manual methods prior to release. Automated scanning satisfies the "automated" component. Manual penetration testing satisfies the rest.

Which combination does your organisation need?

The right stack depends on your maturity level, risk profile, and compliance obligations.

Early-stage or startup. Your development team is small, your codebase changes quickly, and security tooling competes with feature work for attention. Start with free tools: Semgrep (SAST) and Dependabot (SCA) integrate with GitHub in under an hour. Add OWASP ZAP for pre-release DAST scans. Commission an annual penetration test before your first major customer or compliance conversation. This combination costs under £5,000 per year and addresses your most critical gaps.

Growing company (50 to 500 employees). You have dedicated development and IT teams, handle customer data, and face increasing compliance pressure. Move to a commercial SAST tool (SonarQube or Checkmarx) with enforced quality gates in your pipeline. Add Snyk for SCA with container scanning. Run DAST in staging on every release cycle. Commission annual penetration testing, with additional targeted tests around major product changes. Budget: £15,000 to £40,000 per year across tooling and testing.

Enterprise. You operate at scale, carry regulatory obligations (PCI DSS, ISO 27001, DORA, NHS DSPT), and run multiple web applications and APIs. You need all four layers running continuously, a formal application security programme, and a penetration testing cadence that matches your release velocity. PTaaS (Penetration Testing as a Service) models provide continuous testing alongside point-in-time annual assessments. Precursor Security offers CREST-accredited web application penetration testing for UK-regulated organisations that need auditable, compliant assessments.

The common mistake at every maturity level is treating these tools as alternatives. Teams that run DAST and skip penetration testing believe they are covered until a tester demonstrates otherwise. Teams that run penetration testing and skip SAST introduce the same vulnerability classes faster than they can remediate them. The full stack is not an enterprise consideration only. It is the minimum viable programme for any organisation that takes web application risk seriously.

For detail on the specific vulnerability categories penetration testers work through, see our guide on the OWASP Top 10: what penetration testers actually look for.

Frequently Asked Questions

What are SAST, DAST, and SCA? SAST (Static Application Security Testing) scans source code for vulnerabilities before the application runs. DAST (Dynamic Application Security Testing) tests a live application from the outside to find runtime vulnerabilities. SCA (Software Composition Analysis) scans third-party dependencies for known CVEs. Together with manual penetration testing, they form the four main layers of web application security testing.

What is the main difference between SAST and DAST? SAST analyses your source code without running the application, finding coding flaws early in development. DAST tests a running application from the outside, finding issues like misconfigurations and injection flaws that only appear at runtime. SAST has higher false positive rates (30-50%) because it lacks runtime context. DAST has limited visibility into authenticated areas and cannot test business logic. Most application security programmes use both.

Is Nessus a DAST scanner? No. Nessus is a network and infrastructure vulnerability scanner designed to identify known CVEs in operating systems, services, and network configurations by matching detected software versions against the NVD database. Nessus Expert added limited web application scanning in recent versions, but it does not match the depth or breadth of purpose-built DAST tools such as OWASP ZAP, Burp Suite, or Invicti. Use Nessus for network-layer vulnerability scanning and a dedicated DAST tool for application testing.

Can SAST or DAST replace penetration testing? No. SAST and DAST find known vulnerability patterns through automation. Penetration testing finds what automated tools cannot: business logic flaws, authentication bypasses, chained exploits, and IDOR vulnerabilities. Research indicates automated tools miss approximately 85% of business logic vulnerabilities. Automated scanning reduces noise and addresses known patterns; penetration testing validates whether an attacker can actually breach your application.

How often should web application penetration testing be done? At minimum annually, and before any major release that materially changes your attack surface or authentication flows. PCI DSS v4.0 Requirement 11.4 mandates annual web application penetration testing for organisations that process card data. DORA Article 26 requires threat-led penetration testing for in-scope financial entities. High-risk applications in financial services, healthcare, and consumer data should test more frequently. Automated SAST and DAST scans should run on every CI/CD cycle regardless of penetration testing frequency.